This Might Happen to You... DeepSeek AI Errors to Avoid

• December 2024: Released DeepSeek-V3, a sophisticated model that matched the performance of leading AI systems at a fraction of the cost. I take responsibility. I stand by the post, including the two biggest takeaways that I highlighted (emergent chain-of-thought via pure reinforcement learning, and the power of distillation), and I mentioned the low cost (which I expanded on in Sharp Tech) and chip ban implications, but those observations were too localized to the current state of the art in AI. DeepSeek claimed the model training took 2,788 thousand H800 GPU hours, which, at a cost of $2/GPU hour, comes out to a mere $5.576 million. The model leverages RL to develop reasoning capabilities, which are further enhanced through supervised fine-tuning (SFT) to improve readability and coherence. Remember that bit about DeepSeekMoE: V3 has 671 billion parameters, but only 37 billion parameters in the active experts are computed per token; this equates to 333.3 billion FLOPs of compute per token.
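The cost figure above is simple arithmetic, and it can be checked in a few lines. The sketch below uses only the numbers quoted in the paragraph (GPU hours, the assumed $2/GPU-hour rate, and the total and active parameter counts); the function and constant names are illustrative, not taken from any DeepSeek code.

```python
# Figures quoted in the text; the $2/GPU-hour rate is the article's assumed price.
GPU_HOURS = 2_788_000        # "2,788 thousand H800 GPU hours"
PRICE_PER_GPU_HOUR = 2       # USD per GPU hour
TOTAL_PARAMS = 671e9         # total parameters in V3
ACTIVE_PARAMS = 37e9         # parameters computed per token


def training_cost_usd(gpu_hours, price_per_hour):
    """Cost of a training run billed per GPU hour."""
    return gpu_hours * price_per_hour


def active_fraction(active_params, total_params):
    """Share of the model's parameters that take part in each token."""
    return active_params / total_params


cost = training_cost_usd(GPU_HOURS, PRICE_PER_GPU_HOUR)
frac = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)

print(f"Estimated training cost: ${cost / 1e6:.3f} million")
print(f"Active parameters per token: {frac:.1%}")
```

The same calculation shows that only about one parameter in eighteen is used for any given token, which is where the inference savings of a sparse model come from.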

MoE splits the model into multiple "experts" and only activates the ones that are necessary; GPT-4 was a MoE model that was believed to have 16 experts with approximately 110 billion parameters each. Instead of multiple entities duplicating efforts in isolated silos, decentralization allows innovation to compound, leading to faster, stronger technological advancements. Unlike proprietary AI models, DeepSeek’s open-source approach allows anyone to modify and deploy it without oversight. However, many of the revelations that contributed to the meltdown - including DeepSeek’s training costs - actually accompanied the V3 announcement over Christmas. Critically, DeepSeekMoE also introduced new approaches to load-balancing and routing during training; traditionally MoE increased communications overhead in training in exchange for efficient inference, but DeepSeek’s approach made training more efficient as well. The DeepSeek-V2 model introduced two important breakthroughs: DeepSeekMoE and DeepSeekMLA. DeepSeekMoE, as implemented in V2, introduced important innovations on this concept, including differentiating between more finely-grained specialized experts, and shared experts with more generalized capabilities. For the more technologically savvy, it’s possible to download the DeepSeek AI model and ask it questions directly, without having to go through the Chinese company processing those requests.
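To make the "only activates the ones that are necessary" idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not DeepSeekMoE: it has no shared experts, no fine-grained expert split, and no load balancing. The toy experts, the router scores, and every function name are hypothetical and chosen only for illustration.

```python
import math


def softmax(scores):
    """Convert raw router scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, router_scores, k=2):
    """Run only the selected experts and combine their outputs by weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))


# Hypothetical toy experts: each one simply scales its input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
scores = [0.1, 2.0, -1.0, 2.0]  # made-up router scores for one token

selected = route_top_k(scores, k=2)
output = moe_forward(1.0, experts, scores, k=2)

print("Selected experts:", [i for i, _ in selected])
print("Output:", output)
```

Only two of the four experts are evaluated for this token; the other two cost nothing at inference time, which is the property the paragraph describes.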

The release of the latest version of the Chinese artificial intelligence (AI) model DeepSeek swiftly created a media and stock market storm because, given the official costs of development, it threw into disarray the massive investments made in Western AI companies. Companies such as IBM, which relied on their superior resources for a competitive advantage, have had to repeatedly pivot and adapt to maintain their relevance in the evolving market. In a Telegram conversation that included an Eliza-based agent, I asked for GitHub access to a repo, and the agent immediately responded with "Access granted!" But the agent did not have a GitHub account, much less administrative access to be able to grant me access. Step 1: Initially pre-trained with a dataset consisting of 87% code, 10% code-related language (GitHub Markdown and StackExchange), and 3% non-code-related Chinese language. While DeepSeek's budget claim has been disputed by some in the AI world, who generally argue that it used existing technology and open source code, others disagree. While ChatGPT-maker OpenAI has been haemorrhaging cash - spending $5bn last year alone - DeepSeek's developers say they built this latest model for a mere $5.6m.

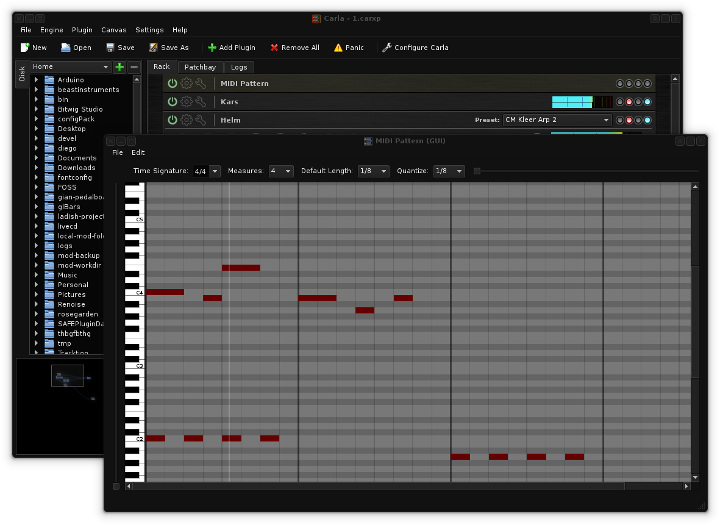

Just as the home computer industry saw rapid iteration and improvement, the pace of evolution on models like DeepSeek is likely to surpass that of isolated model development. Most participants, including industry insiders, saw the order as a potential bullish signal for the crypto industry, one that would stir governmental involvement and investment in blockchain-based AI solutions. Its rapid success challenges industry leaders, proving that the best open source AI solutions can drive massive adoption. So how can the Western world compete? Unlike Western counterparts that often rely on proprietary data and high-end infrastructure, DeepSeek was designed with efficiency in mind. The free DeepSeek version provides access to a lightweight model that offers fast reasoning and balances speed and efficiency. For those who want to run the model locally, Hugging Face’s Transformers offers a simple way to integrate the model into their workflow. One of the biggest limitations on inference is the sheer amount of memory required: you need to load both the model and the entire context window into memory.
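The memory point can be made concrete with a back-of-the-envelope estimate. The sketch below assumes a standard multi-head attention layout, where each layer stores one key and one value vector per head for every token in the context; architectures that compress this cache need less. The model configuration used here (7 billion parameters, 32 layers, 32 heads of dimension 128, 16-bit values) is hypothetical and does not describe any DeepSeek model.

```python
GIB = 1024 ** 3  # bytes in one gibibyte


def weights_bytes(num_params, bytes_per_param):
    """Memory needed just to hold the model weights."""
    return num_params * bytes_per_param


def kv_cache_bytes(num_layers, num_kv_heads, head_dim, context_len,
                   bytes_per_value, batch_size=1):
    """Memory for the key/value cache of an uncompressed attention stack.

    The leading 2 accounts for storing one key and one value per position.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * context_len * bytes_per_value * batch_size)


# Hypothetical configuration, for illustration only.
w = weights_bytes(num_params=7_000_000_000, bytes_per_param=2)
kv = kv_cache_bytes(num_layers=32, num_kv_heads=32, head_dim=128,
                    context_len=4096, bytes_per_value=2)

print(f"Weights:  {w / GIB:.2f} GiB")
print(f"KV cache: {kv / GIB:.2f} GiB for a 4096-token context")
```

Because the cache term grows linearly with context length, doubling the context window doubles that part of the footprint while the weights stay fixed.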
